The Harvard Business Review offers business managers fundamental tips and practices for leading with AI.

Apply AI well

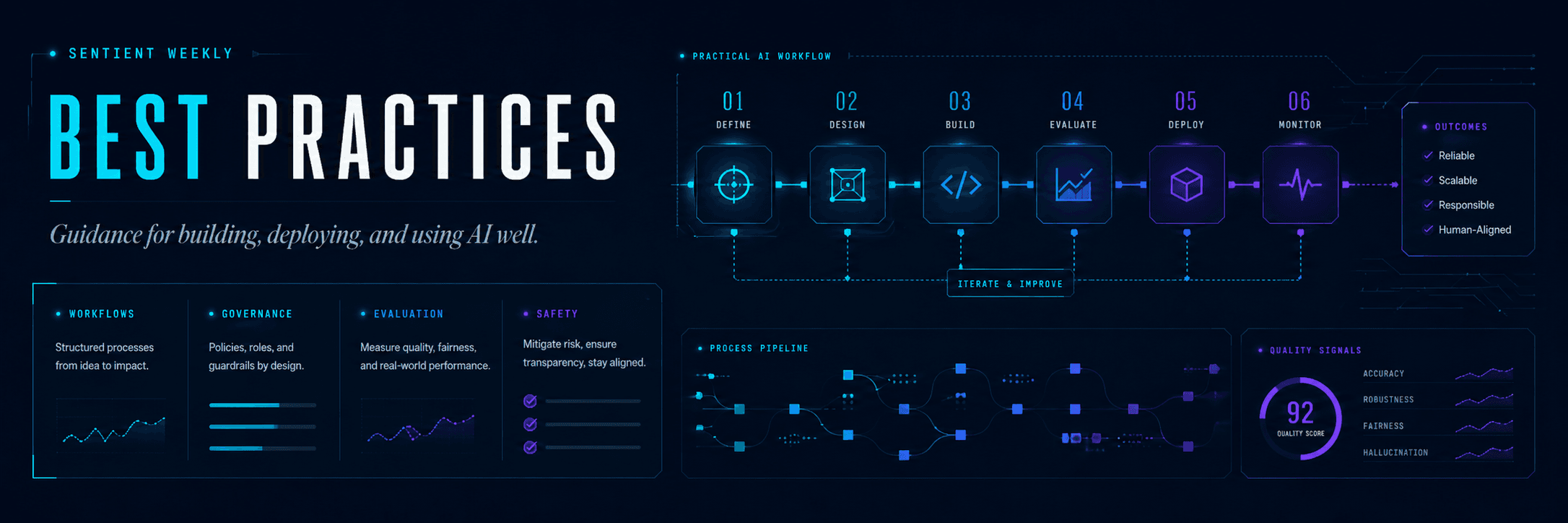

Field-tested guidance for working with AI: leadership patterns, daily workflows, and the books worth reading right now.

Five prompting styles were evaluated on the same task: fixing eight planted bugs in a small Express API. Each style was run three times, and outputs were scored on correctness (40%), security (15%), restraint (15%), code quality (20%, assessed by two independent blind LLM judges), and cost (10%).
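The weighting above amounts to a weighted sum of the five criteria. Below is a minimal Python sketch of how such a composite could be computed. It rests on several assumptions that the source does not state: each criterion is normalized to a 0-to-1 scale where higher is better (so cost is inverted before weighting), code quality is the mean of the two judges' scores, and a style's result is the mean of its runs. The names `run_score` and `style_score` are hypothetical.

```python
from statistics import mean

# Weights as stated in the evaluation; together they sum to 1.0.
WEIGHTS = {
    "correctness": 0.40,
    "security": 0.15,
    "restraint": 0.15,
    "code_quality": 0.20,
    "cost": 0.10,
}


def run_score(scores: dict[str, float], judge_scores: tuple[float, float]) -> float:
    """Composite score for a single run (hypothetical helper).

    Assumption, not from the source: every criterion is already normalized
    to 0..1 with higher meaning better (cost inverted upstream), and code
    quality is the mean of the two blind judges' scores.
    """
    merged = dict(scores, code_quality=mean(judge_scores))
    return sum(weight * merged[name] for name, weight in WEIGHTS.items())


def style_score(runs: list[tuple[dict[str, float], tuple[float, float]]]) -> float:
    """Average the composite over one style's runs (three per style in the study)."""
    return mean(run_score(scores, judges) for scores, judges in runs)
```

For example, a run that is fully correct but scores 0 on security, restraint, and cost, with judge scores of 0.5 and 1.0, comes out at 0.40 + 0.20 × 0.75 = 0.55.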

Enterprise AI adoption is accelerating rapidly, driven by advances in generative AI. Generative models learn from data, shift as their inputs change, and improve with new training techniques and datasets. Yet even as models grow more sophisticated and accurate, state-of-the-art LLMs and AI projects still pose enterprise risks, especially once deployed in production. As a result, AI governance matters more than ever, and companies need a robust, multifaceted governance strategy in place.

Large language models are becoming increasingly capable of handling complex, multi-step tasks. Advances in reasoning, multimodality, and tool use have unlocked a new category of LLM-powered systems known as agents.

Create, manage, and share skills to extend Claude’s capabilities in Claude Code. Includes custom commands and bundled skills.

Build your skills through written guides and video lessons covering everything from quick tasks to complex workflows and best practices.

Developers are not just looking for prompts; they want better ways to structure agent behavior, reduce debugging time, improve consistency, and make AI agents more effective on complex projects. In this article, we will look at 10 GitHub repositories that can help you do exactly that.

Through this analysis, we assembled a definition of sophisticated AI use that builds on prompt engineering, pairing clear prompts with deliberately applied strategies, and identified a set of low-cost, observable indicators, such as model switching and structured initial prompts, that predict high-level use. These insights are being integrated into KPMG’s talent, learning, and performance systems, and they offer a framework any organization can use to cultivate and measure more sophisticated AI use among its employees.

Although large language models (LLMs) are typically confined to narrow, archetypal roles such as "writing email messages" or "acting as advanced search engines," they have considerable untapped potential for creative problem-solving. The challenge is to uncover that potential and extend it into less-explored territory.

Don't take your legacy processes and simply digitize them with AI. AI is an opportunity to reimagine HOW you do business and IMPROVE the way you work.

As AI-powered tools become increasingly prevalent, prompt engineering is becoming a skill that developers need to master. Large language models (LLMs) and other generative foundation models need contextual, specific, and tailored natural-language instructions to produce the desired output, so developers must write prompts that are clear, concise, and informative. In this blog post, we will explore six best practices that will make you a more efficient prompt engineer. By following them, you can begin building more personalized, accurate, and contextually aware applications. So let's get started!

Whether you’re just starting to engage with AI or already consider yourself a power user, here are 17 best practices to help you use AI with creativity, clarity, and confidence.