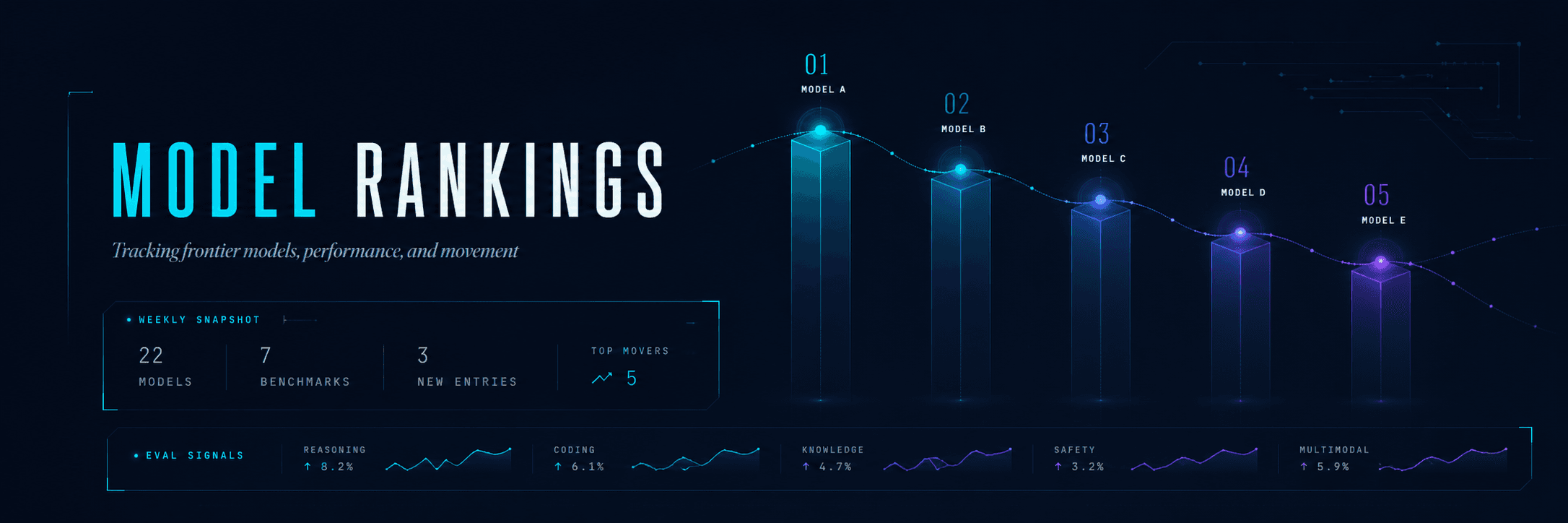

LLM rankings, side by side

Benchmarks, cost vs intelligence, context windows, and head-to-head comparisons across every major large language model.

Six lenses on the LLM field. Use the composite ranks for the headline answer and the cost curves and heatmap for the nuance. Where you start depends on what you're optimizing for.

Best value: high intelligence, low cost

Bubble size = context window. Best-value quadrant highlighted.
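The best-value quadrant can be sketched as a simple filter. This is a minimal sketch that assumes the quadrant boundaries sit at the median cost and median intelligence of the plotted models. The page does not say where it draws them. The model names and numbers are hypothetical placeholders, not values from this page.

```python
from statistics import median

# Hypothetical (model, cost, intelligence) points: cost in USD per 1M tokens,
# intelligence on an index-like scale. These are NOT values from this page.
POINTS = [
    ("model-a", 10.0, 60.0),
    ("model-b", 1.5, 52.0),
    ("model-c", 0.5, 40.0),
    ("model-d", 15.0, 45.0),
]


def best_value(points):
    """Return names in the 'high intelligence, low cost' quadrant.

    Assumption: the quadrant is split at the median cost and the median
    intelligence of the plotted models.
    """
    cost_mid = median(p[1] for p in points)
    intel_mid = median(p[2] for p in points)
    return [
        name
        for name, cost, intel in points
        if cost <= cost_mid and intel >= intel_mid
    ]


print(best_value(POINTS))  # prints ['model-b']
```

Splitting at the median keeps the quadrant relative to whichever models are on the chart. A fixed price or score threshold would be the obvious alternative.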

The composite rank averages the two same-scale intelligence indices from Artificial Analysis (AA), Overall and Coding, for each model. Math is shown alongside at right but excluded from this rank. Its scores fall in an 80–99 range, so including it would inflate the averages of models that have a math score and penalize models AA has not yet scored on math.
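Here is a minimal sketch of the composite rank as described. It takes the mean of each model's Overall and Coding indices, leaves Math out, and sorts in descending order. The values are copied from the first four rows of the per-benchmark table on this page. The function name `composite_rank` is hypothetical, and the sketch is one reading of the stated formula rather than the site's own code.

```python
# Overall and Coding index values copied from the first four rows of the
# per-benchmark table on this page. Math is deliberately left out.
INDICES = {
    "GPT-5.5 (xhigh)": {"overall": 60.2, "coding": 59.1},
    "Claude Opus 4.7 (Adaptive Reasoning, Max Effort)": {"overall": 57.3, "coding": 52.5},
    "Gemini 3.1 Pro Preview": {"overall": 57.2, "coding": 55.5},
    "GPT-5.5 (medium)": {"overall": 56.7, "coding": 56.2},
}


def composite_rank(indices):
    """Rank models by the mean of their Overall and Coding indices (hypothetical helper)."""
    scores = {
        name: (v["overall"] + v["coding"]) / 2
        for name, v in indices.items()
    }
    # Highest composite first.
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)


for name, score in composite_rank(INDICES):
    print(f"{score:5.2f}  {name}")
```

Under this reading, the composite reorders these four rows relative to the Overall column. GPT-5.5 (medium) and Gemini 3.1 Pro Preview both move ahead of Claude Opus 4.7 because of their higher Coding scores.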

These are the three intelligence indices (Overall, Coding, and Math) that Artificial Analysis publishes for each LLM it evaluates, shown side by side for the top models. Missing values are shown as —.

Math shows as — when AA has not run the underlying math benchmarks (AIME, MATH-500…) against a given variant, which is common for high-effort reasoning configurations.

Bubble size = intelligence. Bottom-right is the operational sweet spot: fast responses for low cost.

Per-benchmark scores for the most-populated benchmark columns. Each column is normalized independently so that benchmarks on different scales (e.g., MMLU at 0–100 vs. AIME at 0–30) can be compared directly.
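The page does not state which normalization the heatmap uses. This minimal sketch assumes min-max scaling per column. It maps each column onto [0, 1] independently and leaves missing cells (—) as gaps. The helper `normalize_column` is hypothetical. The sample inputs are the HLE and Creative values for the first four rows of the table.

```python
from typing import Optional

# One benchmark column; None marks a missing score (shown as — in the table).
Column = list[Optional[float]]


def normalize_column(values: Column) -> Column:
    """Min-max scale one column to [0, 1], keeping missing cells as None.

    Assumption: min-max scaling is one common heatmap choice; the page
    does not say which method it actually uses.
    """
    present = [v for v in values if v is not None]
    if not present:
        return [None] * len(values)
    lo, hi = min(present), max(present)
    span = hi - lo
    return [
        # A column with no spread has nothing to scale against: use the midpoint.
        None if v is None else (0.5 if span == 0 else (v - lo) / span)
        for v in values
    ]


hle = [0.44, 0.40, 0.45, 0.41]         # HLE, first four table rows
creative = [None, None, 8.50, None]    # Creative, same rows
print(normalize_column(hle))
print(normalize_column(creative))      # prints [None, None, 0.5, None]
```

Because each column is scaled against its own minimum and maximum, a 0–30 benchmark and a 0–100 benchmark land on the same colour range. The trade-off is that a column with very little spread gets stretched to look as varied as one with a lot.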

| Model | Developer | Overall (n=12) | Coding (n=12) | GPQA (n=12) | HLE (n=12) | IFBench (n=12) | LCR (n=12) | SciCode (n=12) | Terminal-Bench Hard (n=12) | τ²-Bench (n=12) | Creative (n=2) |
|---|---|---|---|---|---|---|---|---|---|---|---|
| GPT-5.5 (xhigh) | OpenAI | 60.2 | 59.1 | 0.94 | 0.44 | 0.76 | 0.74 | 0.56 | 0.61 | 0.94 | — |
| Claude Opus 4.7 (Adaptive Reasoning, Max Effort) | Anthropic | 57.3 | 52.5 | 0.91 | 0.40 | 0.59 | 0.70 | 0.55 | 0.52 | 0.89 | — |
| Gemini 3.1 Pro Preview | Google DeepMind | 57.2 | 55.5 | 0.94 | 0.45 | 0.77 | 0.73 | 0.59 | 0.54 | 0.96 | 8.50 |
| GPT-5.5 (medium) | OpenAI | 56.7 | 56.2 | 0.93 | 0.41 | 0.71 | 0.72 | 0.54 | 0.58 | 0.92 | — |
| MiMo-V2.5-Pro | | 53.8 | 45.5 | 0.87 | 0.34 | 0.80 | 0.73 | 0.50 | 0.43 | 0.94 | — |
| Claude Opus 4.6 (Adaptive Reasoning, Max Effort) | Anthropic | 53.0 | 48.1 | 0.90 | 0.37 | 0.53 | 0.71 | 0.52 | 0.46 | 0.92 | — |
| Muse Spark | | 52.1 | 47.5 | 0.88 | 0.40 | 0.76 | 0.70 | 0.52 | 0.46 | 0.92 | — |
| Qwen3.6 Max Preview | | 51.8 | 44.9 | 0.89 | 0.29 | 0.77 | 0.70 | 0.47 | 0.44 | 0.96 | — |
| Claude Sonnet 4.6 (Adaptive Reasoning, Max Effort) | Anthropic | 51.7 | 50.9 | 0.88 | 0.30 | 0.57 | 0.71 | 0.47 | 0.53 | 0.76 | 8.70 |
| GPT-5.5 (low) | OpenAI | 50.8 | 52.1 | 0.91 | 0.31 | 0.64 | 0.72 | 0.52 | 0.52 | 0.84 | — |
| Claude Opus 4.5 (Reasoning) | Anthropic | 49.7 | 47.8 | 0.87 | 0.28 | 0.58 | 0.74 | 0.50 | 0.47 | 0.90 | — |
| GPT-5.4 mini (xhigh) | OpenAI | 48.9 | 51.5 | 0.88 | 0.27 | 0.73 | 0.69 | 0.50 | 0.52 | 0.83 | — |

Estimate your monthly cost across models given your usage.
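The monthly estimate is plain arithmetic: tokens per month multiplied by price per million tokens, summed over input and output. This minimal sketch assumes a 30-day month, separate input and output prices, and fixed average token counts per request. The `Pricing` class, the `monthly_cost` helper, the model names, and the prices are all hypothetical placeholders, since this page lists no prices.

```python
from dataclasses import dataclass


@dataclass
class Pricing:
    """Hypothetical per-model pricing in USD per 1M tokens."""
    input_per_m: float
    output_per_m: float


def monthly_cost(p: Pricing, requests_per_day: int,
                 input_tokens: int, output_tokens: int, days: int = 30) -> float:
    """Estimate monthly spend from average tokens per request (assumes a 30-day month)."""
    total_in = requests_per_day * input_tokens * days
    total_out = requests_per_day * output_tokens * days
    return (total_in * p.input_per_m + total_out * p.output_per_m) / 1_000_000


# Placeholder prices for illustration only; substitute current list prices.
MODELS = {
    "model-x": Pricing(input_per_m=1.25, output_per_m=10.0),
    "model-y": Pricing(input_per_m=0.25, output_per_m=2.0),
}

for name, pricing in MODELS.items():
    cost = monthly_cost(pricing, requests_per_day=1_000,
                        input_tokens=2_000, output_tokens=500)
    print(f"{name}: ${cost:,.2f}/month")
# prints:
# model-x: $225.00/month
# model-y: $45.00/month
```

Output tokens are priced separately because providers usually charge more for them than for input. As a result, a workload with long responses can rank models differently from one with long prompts.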

Sources: artificialanalysis.ai, LMSYS Chatbot Arena, Stanford HELM, and official model documentation. Composite scores are updated when new benchmark results are published.

Rankings data is provided by Artificial Analysis; supplementary benchmarks come from CSV imports.